Why keyword density isn’t (and is) important.

Among the SEO crème de la crème, keyword density is a phrase not even worth rehashing, but for many, it remains a mainstay in their SEO lexicon. I’ve run my own experiments and seen my own successes in the past with upping my keyword density, which is why I’ve lauded it, despite “knowing better”. I’ve always had some knowledge of term vector theory, but only at the highest of levels; I had never actually sat down and walked my math-retired mind through it to truly get it.

Disclaimer: I do not claim to be an expert on term vector theory. If you see inaccuracies, please feel free to comment or shoot me an email.

What I aim to do here is explain why so many SEO big shots (correctly in their circumstances) disregard keyword density, and then show why it can be beneficial in other circumstances.

First, I’m basing this on Salton’s Vector Space Model of information retrieval (or IR…you know, what search engines do). Basic elements of this model include:

- Term frequency

- Maximum term frequency in a document – the number of times the most frequent phrase (of the same length as the target phrase) appears in a document

- Document frequency

- Total documents

The equations of interest are:

- Term weight: (term frequency / maximum term frequency in a document) × log(total documents / document frequency)

- Inverse document frequency, which is just the second half of the term weight equation: log(total documents / document frequency)
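To make the two equations concrete, here is a minimal Python sketch of them. The function names are my own, the log base (10) is an assumption since the formula above doesn’t specify one, and the numbers in the example call are made up for illustration.

```python
import math


def inverse_document_frequency(total_docs: int, doc_freq: int) -> float:
    """log(total documents / document frequency). Base 10 is an assumption."""
    return math.log10(total_docs / doc_freq)


def term_weight(term_freq: int, max_term_freq: int,
                total_docs: int, doc_freq: int) -> float:
    """(term frequency / maximum term frequency) * inverse document frequency."""
    normalized_tf = term_freq / max_term_freq
    return normalized_tf * inverse_document_frequency(total_docs, doc_freq)


# Hypothetical numbers: the phrase appears 3 times, the most frequent
# phrase of the same length appears 6 times, and 10 of 1,000 documents
# in the collection contain the phrase.
print(term_weight(term_freq=3, max_term_freq=6, total_docs=1000, doc_freq=10))
```

Note that the first factor is capped at 1 (when your phrase is the most frequent one of its length), so everything beyond that comes from the inverse document frequency.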

Now the term weight is the item of interest from a ranking standpoint. If we assume this is a part of the search algorithm, we would like a high term weight (but much like the wheel on The Price Is Right, without going over). Keyword density gets discounted under this model because it’s only a ratio within a single document; it disregards the surrounding environment, which this model takes into account.

Keyword density makes us feel warm and fuzzy because we can calculate it. But, in the grand scheme of things, that usually means very little. Why? Because in the term vector model, we’re dealing with unknowns. We do not know the exact document frequency, and we do not know the exact number of documents in the database. Further, if you look at the equation, the logarithm in the inverse document frequency compresses large differences in document counts, which helps to normalize results. Let me explain this with a tangible example.

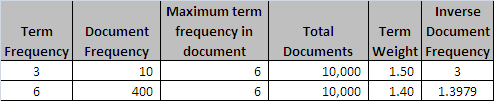

Let’s say we have two pages optimizing for the phrase “Netvantage Marketing”. The first page has a frequency of three for this phrase, while the other has a frequency of six. The maximum frequency for a two-word phrase on each is six. Intuitively, one would think that the document with the higher frequency of “Netvantage Marketing” would have the higher term weight, but that depends on the other variables. The inverse document frequency is driven largely by the document frequency, that is, the number of documents containing the term.

Because the true document frequency and the total number of documents are both unknown (unless you’re willing to believe Google’s ever-changing number of results), you’re really just shooting at a moving target. Here, the page with the term frequency of 3 actually has a higher term weight. So, to some extent, one cannot confidently say that a higher “keyword density” or term frequency will actually lead to better results.
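Here is a rough sketch of how much the unknowns can swing the result. These are not the numbers from the image above; the two "guesses" at total documents and document frequency are invented purely for illustration, and the log base of 10 is again an assumption.

```python
import math


def term_weight(tf, max_tf, total_docs, doc_freq):
    # (term frequency / max term frequency) * log10(total docs / doc frequency)
    return (tf / max_tf) * math.log10(total_docs / doc_freq)


# Two hypothetical guesses at values we cannot actually observe.
guesses = {
    "rare-term guess": {"total_docs": 1_000_000, "doc_freq": 100},
    "common-term guess": {"total_docs": 1_000_000, "doc_freq": 100_000},
}

weights = {}
for label, guess in guesses.items():
    for tf in (3, 6):
        weights[(label, tf)] = term_weight(tf, max_tf=6, **guess)
        print(f"{label:18} tf={tf}  weight={weights[(label, tf)]:.2f}")
```

Under these made-up guesses, the page with a frequency of 3 scored against the rare-term guess ends up with a higher weight than the page with a frequency of 6 scored against the common-term guess. The point is only that the frequency you control is one input among several you can’t see.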

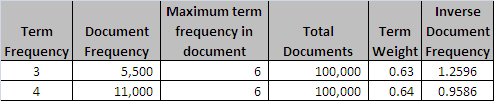

As you can see, the more documents a term appears in, the more it’s marginalized, so changing its frequency has less impact.
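The same effect can be sketched in a few lines of Python. The collection size and the document frequencies below are hypothetical, and I’m assuming a base-10 log; the sketch just compares a page with a frequency of 3 against one with a frequency of 6 as the term shows up in more and more documents.

```python
import math

TOTAL_DOCS = 1_000_000  # hypothetical collection size
MAX_TF = 6              # most frequent two-word phrase appears 6 times


def term_weight(tf, doc_freq):
    # (term frequency / max term frequency) * log10(total docs / doc frequency)
    return (tf / MAX_TF) * math.log10(TOTAL_DOCS / doc_freq)


gaps = []
for doc_freq in (10, 1_000, 100_000, 900_000):
    low = term_weight(3, doc_freq)
    high = term_weight(6, doc_freq)
    gaps.append(high - low)
    print(f"doc_freq={doc_freq:>7}  tf=3: {low:.3f}  tf=6: {high:.3f}  "
          f"gap: {high - low:.3f}")
```

As the document frequency climbs toward the total number of documents, the inverse document frequency heads toward zero, and the gap between the two pages shrinks with it.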

So this is why people, particularly in competitive markets, pay little or no attention to “keyword density” and instead focus on writing good content. The way to win in the SERPs for those markets will be through links.

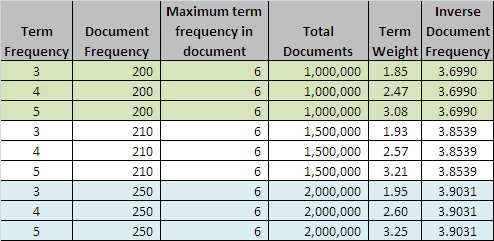

However, the more I dug into this, the more I realized that term vector theory and term weights actually validate the positive results that people (me being one of them) claim to get from increasing keyword density/frequency. In less competitive markets and when going after long tail terms, the math actually makes sense. Consider that in these markets the document frequency doesn’t fluctuate or grow at the rate it does in hyper-competitive markets. As a result, changing the term frequency can have a more dramatic impact, especially considering that competitor sites probably aren’t well optimized on page and probably don’t have great link strength. Check out this simplified example of this scenario:

If the environment doesn’t change much, the number of total documents has little effect; it’s the document frequency’s stagnation that allows for a (seemingly) sustained advantage in this non-competitive market. So, if you’re in such a market, by all means, fiddle with your keyword density/frequency. You might see some serious results through improving your term weight.
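To put rough numbers on that idea, here is a sketch that tracks the term weight of a fully optimized page over six months in two invented markets: a niche where the document frequency stays put, and a competitive one where it grows 50% a month. Every figure here is hypothetical, the total document count is held fixed for simplicity, and the base-10 log is an assumption.

```python
import math

TOTAL_DOCS = 1_000_000  # hypothetical, held fixed for simplicity
MAX_TF = 6


def term_weight(tf, doc_freq):
    # (term frequency / max term frequency) * log10(total docs / doc frequency)
    return (tf / MAX_TF) * math.log10(TOTAL_DOCS / doc_freq)


def weight_over_time(tf, start_doc_freq, monthly_growth, months=6):
    """Term weight month by month as competing documents are added."""
    weights = []
    doc_freq = start_doc_freq
    for _ in range(months):
        weights.append(term_weight(tf, doc_freq))
        # New competing pages appear; cap at the size of the collection.
        doc_freq = min(TOTAL_DOCS, int(doc_freq * (1 + monthly_growth)))
    return weights


niche = weight_over_time(tf=6, start_doc_freq=50, monthly_growth=0.0)
competitive = weight_over_time(tf=6, start_doc_freq=50_000, monthly_growth=0.5)

for month, (n, c) in enumerate(zip(niche, competitive), start=1):
    print(f"month {month}: niche={n:.3f}  competitive={c:.3f}")
```

In the niche case the weight you gain from raising your frequency simply stays where you put it, while in the competitive case the same on-page work is steadily eroded as more documents pile in.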

Now whether or not this is how search engines use term vector theory/term weight, I can’t say for sure, but hopefully this either sheds some light or starts a good conversation on the subject, as I’m still evolving my understanding.
